New York Times

Lego Beatles :: Your Mother Should Know (Innocent Scandinavian Social Values vs. Darth Hollywood and the Lego-Sean Connerys from Hell)

Beatles: Rock Band; Impressive Review

THERE may be no better way to bait a baby boomer than to be anything less than totally reverential about the Beatles. So the news that the lads from Liverpool were taking fresh form in a video game (a video game!) called The Beatles: Rock Band struck some of the band’s acolytes as nothing less than heresy.

Luckily, Paul McCartney and Ringo Starr, along with the widows of George Harrison and John Lennon, seem to understand that the Beatles are not a museum piece, that the band and its message ought never be encased in amber. The Beatles: Rock Band is nothing less than a cultural watershed, one that may prove only slightly less influential than the band’s famous appearance on “The Ed Sullivan Show” in 1964. By reinterpreting an essential symbol of one generation in the medium and technology of another, The Beatles: Rock Band provides a transformative entertainment experience.

In that sense it may be the most important video game yet made.

Never before has a video game had such intergenerational cultural resonance. The weakness of most games is that they are usually devoid of any connection to our actual life and times. There is usually no broader meaning, no greater message, in defeating aliens or zombies, or even in the cognitive gameplay of determining strategy or solving puzzles.

Previous titles in the Rock Band and Guitar Hero series have already done more in recent years to introduce young people to classic rock than all the radio stations in the country. But this new game is special because it so lovingly, meticulously, gloriously showcases the relatively brief career of the most important rock band of all time. The music and lyrics of the Beatles are no less relevant today than they were all those decades ago, and by reimagining the Beatles’ message in the unabashedly modern, interactive, digital form of now, the new game ties together almost 50 years of modern entertainment.

With all due respect to Wii Sports, no video game has ever brought more parents together with their teenage and adult children than The Beatles: Rock Band likely will in the months and years to come.

One Friday evening last month I invited a gaggle of 20-something hipsters (I’m 36) to my apartment in Williamsburg, Brooklyn, to try the game. After 15 minutes one 25-year-old said, “I’m going to have to buy this for my parents this Christmas, aren’t I?” After nine hours we had completed all of the game’s 45 songs in one marathon session. On Saturday afternoon, I woke up to watch a 20-year-old spend three hours mastering the rolling, syncopated drum sequences in “Tomorrow Never Knows.” Thirty-six hours later, near dawn Monday morning, there were still a few happy stragglers in my living room belting out “Back in the U.S.S.R.” Good thing my neighbors were away for the weekend.

I grew up in Woodstock, N.Y., steeped in classic rock, so I had a head start on my younger band mates. (I suspect many parents will enjoy having a similar leg up on their progeny.) Yet I watched the same transformation all weekend long. We would start a song like “Something” or “While My Guitar Gently Weeps,” and as it began, they would say, “Oh yeah, that one.” Then at the end there would literally be a stunned silence before someone would say something unprintable, or simply “Wow” as they fully absorbed the emotional intensity and almost divine melodies of the Beatles.

Not only was the game serving to reintroduce this music, but by leading the players through a schematic version of actually creating the songs, it was also doing so in a much more engaging way than merely listening to a recording. It is an imperfect analogy, but listening to a finished song is perhaps like being served a finished recipe: you know it tastes great even if you have no sense of how it was created.

By contrast, playing a music game like Rock Band is a bit closer to following a recipe yourself or watching a cooking show on television. Sure, the result won’t be of professional caliber (after all, you didn’t go to cooking school, the equivalent of music lessons), but you may have a greater appreciation for the genius who created the dish than the restaurantgoer, because you have attempted it yourself.

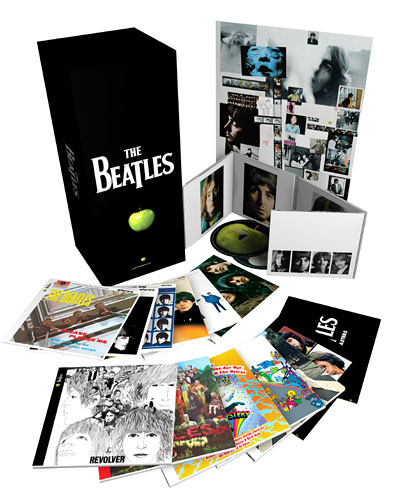

Previous music games have been about collections of songs. The Beatles: Rock Band is about representing and reoffering an entire worldview encapsulated in music. The developers at Harmonix Music Systems have translated the Beatles’ scores and tablature into a form that is accessible while also conveying the visceral rhythm of the music. In its melding of source material and presentation, The Beatles: Rock Band is sheer pleasure. The game is scheduled to be released by MTV Games for the PlayStation 3, Wii and Xbox 360 consoles on Wednesday, the same day the remastered Beatles catalog is slated to be released on CD.

Mechanically it is almost identical to previous Rock Band games. One player sings into a microphone, replacing the original lead vocals, while another plays an electronic drum kit and two more play ersatz guitar and bass. (The new game supports up to two additional singers for a potential maximum of six players.)

In the game’s story line mode, players inhabit the various Beatles as they progress from the Cavern Club to Ed Sullivan’s stage; Shea Stadium; the Budokan in Japan; Abbey Road; and their final appearance on the Apple Corps roof in 1969. Unlike in previous Rock Band games, players are not booed off the virtual stage for a poor performance; rather the screen cuts to a declarative “Song failed” message. Previously unreleased studio chatter provides a soundtrack for some of the menu and credits screens, but there is no direct interaction with avatars of John, Paul, George or Ringo.

The colorful psychedelic dreamscapes used to represent the band’s in-studio explorations are particularly evocative, though they serve mostly to entertain onlookers rather than the players themselves (who will be concentrating on getting the music right rather than looking at the pretty pictures).

Of course almost nothing could be more prosaic than pointing out that playing a music game is not the same as playing a real instrument. Yet there is something about video games that seems to inspire true anger in some older people.

Why is that? Is there still really a fear that a stylized representation of reality detracts from reality itself? In recent centuries every new technology for creating and enjoying music — the phonograph, the electric guitar, the Walkman, MTV, karaoke, the iPod — has been condemned as the potential death of “real” music.

But music is eternal. Each new tool for creating it, and each new technology for experiencing it, only brings the joy of more music to more people. This new game is a fabulous entertainment that will not only introduce the Beatles’ music to a new audience but will also bring millions of their less-hidebound parents into gaming. For that its makers are entitled to a deep simultaneous bow, Beatles style.

[From New York Times article “All Together Now: Play the Game, Mom”]

Rapture of the Nerds

The Coming Superbrain

Mountain View, Calif. — It’s summertime and the Terminator is back. A sci-fi movie thrill ride, “Terminator Salvation” comes complete with a malevolent artificial intelligence dubbed Skynet, a military R.&D. project that gained self-awareness and concluded that humans were an irritant — perhaps a bit like athlete’s foot — to be dispatched forthwith.

The notion that a self-aware computing system would emerge spontaneously from the interconnections of billions of computers and computer networks goes back in science fiction at least as far as Arthur C. Clarke’s “Dial F for Frankenstein.” A prescient short story that appeared in 1961, it foretold an ever-more-interconnected telephone network that spontaneously acts like a newborn baby and leads to global chaos as it takes over financial, transportation and military systems.

Today, artificial intelligence, once the preserve of science fiction writers and eccentric computer prodigies, is back in fashion. It is getting serious attention from NASA and from Silicon Valley companies like Google, as well as from a new round of start-ups that are designing everything from next-generation search engines to machines that listen or that are capable of walking around in the world. A.I.’s new respectability is turning the spotlight back on the question of where the technology might be heading and, more ominously, perhaps, whether computer intelligence will surpass our own, and how quickly.

The concept of ultrasmart computers — machines with “greater than human intelligence” — was dubbed “The Singularity” in a 1993 paper by the computer scientist and science fiction writer Vernor Vinge. He argued that the acceleration of technological progress had led to “the edge of change comparable to the rise of human life on Earth.” This thesis has long struck a chord here in Silicon Valley.

Artificial intelligence is already used to automate and replace some human functions with computer-driven machines. These machines can see and hear, respond to questions, learn, draw inferences and solve problems. But for the Singulatarians, A.I. refers to machines that will be both self-aware and superhuman in their intelligence, and capable of designing better computers and robots faster than humans can today. Such a shift, they say, would lead to a vast acceleration in technological improvements of all kinds.

The idea is not just the province of science fiction authors; a generation of computer hackers, engineers and programmers has come to believe deeply in the idea of exponential technological change as explained by Gordon Moore, a co-founder of the chip maker Intel.

In 1965, Dr. Moore first described the repeated doubling of the number of transistors on silicon chips with each new technology generation, which led to an acceleration in the power of computing. Since then “Moore’s Law” (which is not a law of physics, but rather a description of the rate of industrial change) has come to personify an industry that lives on Internet time, where the Next Big Thing is always just around the corner.

The artificial-intelligence pioneer Raymond Kurzweil took the idea one step further in his 2005 book, “The Singularity Is Near: When Humans Transcend Biology.” He sought to expand Moore’s Law to encompass more than just processing power and to simultaneously predict with great precision the arrival of post-human evolution, which he said would occur in 2045.

In Dr. Kurzweil’s telling, rapidly increasing computing power in concert with cyborg humans would then reach a point when machine intelligence not only surpassed human intelligence but took over the process of technological invention, with unpredictable consequences.

Profiled in the documentary “Transcendent Man,” which had its premiere last month at the TriBeCa Film Festival, and with his own Singularity movie due later this year, Dr. Kurzweil has become a one-man marketing machine for the concept of post-humanism. He is the co-founder of Singularity University, a school supported by Google that will open in June with a grand goal: to “assemble, educate and inspire a cadre of leaders who strive to understand and facilitate the development of exponentially advancing technologies and apply, focus and guide these tools to address humanity’s grand challenges.”

Not content with the development of superhuman machines, Dr. Kurzweil envisions “uploading,” or the idea that the contents of our brain and thought processes can somehow be translated into a computing environment, making a form of immortality possible — within his lifetime.

That has led to no shortage of raised eyebrows among hard-nosed technologists in the engineering culture here, some of whom describe the Kurzweilian romance with supermachines as a new form of religion.

The science fiction author Ken MacLeod described the idea of the singularity as “the Rapture of the nerds.” Kevin Kelly, an editor at Wired magazine, notes, “People who predict a very utopian future always predict that it is going to happen before they die.”

However, Mr. Kelly himself has not refrained from speculating on where communications and computing technology is heading. He is at work on his own book, “The Technium,” forecasting the emergence of a global brain — the idea that the planet’s interconnected computers might someday act in a coordinated fashion and perhaps exhibit intelligence. He just isn’t certain about how soon an intelligent global brain will arrive.

Others who have observed the increasing power of computing technology are even less sanguine about the future outcome. The computer designer and venture capitalist William Joy, for example, wrote a pessimistic essay in Wired in 2000 that argued that humans are more likely to destroy themselves with their technology than create a utopia assisted by superintelligent machines.

Mr. Joy, a co-founder of Sun Microsystems, still believes that. “I wasn’t saying we would be supplanted by something,” he said. “I think a catastrophe is more likely.”

Moreover, there is a hot debate here over whether such machines might be the “machines of loving grace,” of the Richard Brautigan poem, or something far darker, of the “Terminator” ilk.

“I see the debate over whether we should build these artificial intellects as becoming the dominant political question of the century,” said Hugo de Garis, an Australian artificial-intelligence researcher, who has written a book, “The Artilect War,” that argues that the debate is likely to end in global war.

Concerned about the same potential outcome, the A.I. researcher Eliezer S. Yudkowsky, an employee of the Singularity Institute, has proposed the idea of “friendly artificial intelligence,” an engineering discipline that would seek to ensure that future machines would remain our servants or equals rather than our masters.

Nevertheless, this generation of humans, at least, is perhaps unlikely to need to rush to the barricades. The artificial-intelligence industry has advanced in fits and starts over the past half-century, since the term “artificial intelligence” was coined by the Stanford University computer scientist John McCarthy in 1956. In 1964, when Mr. McCarthy established the Stanford Artificial Intelligence Laboratory, the researchers informed their Pentagon backers that the construction of an artificially intelligent machine would take about a decade. Two decades later, in 1984, that original optimism hit a rough patch, leading to the collapse of a crop of A.I. start-up companies in Silicon Valley, a time known as “the A.I. winter.”

Such reversals have led the veteran Silicon Valley technology forecaster Paul Saffo to proclaim: “never mistake a clear view for a short distance.”

Indeed, despite this high-technology heartland’s deeply held consensus about exponential progress, the worst fate of all for the Valley’s digerati would be to be the generation before the generation that lives to see the singularity.

“Kurzweil will probably die, along with the rest of us, not too long before the ‘great dawn,’ ” said Gary Bradski, a Silicon Valley roboticist. “Life’s not fair.”

[A version of this article appeared in print on May 24, 2009, on page WK1 (Week in Review, page 1) of the New York edition (of the New York Times).]